How I learned to code with AI and built graph.electrafrost.com

Making my professional and intellectual provenance open source and interactive on the semantic web, as a discoverable knowledge asset for humans and AI to find.

Discoverability is the precursor to agentic procurement: AI agents searching for qualified advisers on behalf of clients can only find what is open online, machine-readable and verifiable.

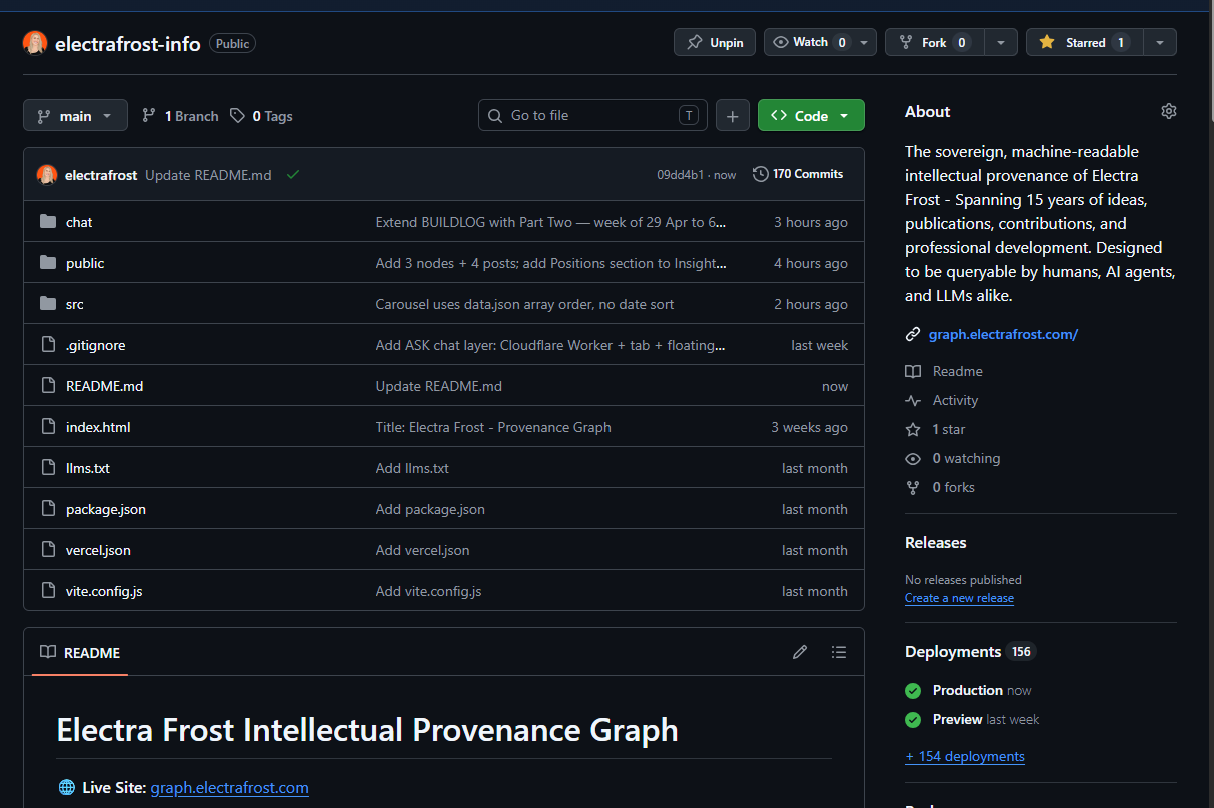

graph.electrafrost.com is live. It aggregates over a decade of professional development and ideas that were stored across a bunch of closed cloud servers, now structured and queryable on my own domain, with the source in GitHub.

Still an incomplete dataset, it’s a work in progress that so far contains 14 intellectual eras, 58 thesis nodes, 88 verified CPD records, 662 indexed social posts spanning 2018 to 2026, an ASK chatbot grounded in the corpus, and an admin loop where every visitor question can become a corpus improvement.

I built it end to end from the command line, with Claude as a pair-programmer, on Cloudflare’s free tier. The total marginal cost is a few dollars a year.

Some will say this is not really coding because Claude wrote lines for me. Architecting a system, picking a stack, debugging at the DNS level, running live database migrations, owning every error, and shipping to production is what coding means in 2026.

So I'm now a technical (rather than non-technical) builder. Which other accountants are doing this? I want to find you.

Why I built it myself

“It is by forging that one becomes a blacksmith.” - French proverb

Accountants will not earn the right to govern frontier AI by reading about it. We earn it by building tools. What you ship and share with the world is your true signal of professional capability now.

Degrees, diplomas, continuous professional development certificates, designations, and LinkedIn headlines are claims about your work. Intellectual provenance built on those claims, hosted on your own domain and queryable in your own voice, is your work made visible and active.

A professional credential issuer asked for my CV, which is not a fair format to reflect 20 years of self-employment and transdisciplinary professional development. I wondered: how would an accounting technologist write their CV?

Instead of a CV, I wrote this:

Learning to govern AI, not just outsourcing my work to AI:

I deliberately used Claude in chat as my pair-programmer, not Claude Code or Cowork. Claude Code would have built the system for me. Cowork would have automated the file operations. Chat required me to type every command into PowerShell, paste every error back, analyse and own every diagnostic step.

Friction was the curriculum. Without doing the work myself, I could not have learned to recognise when AI gets it wrong, which is the skill I need as a professional builder.

The technical journey

Six weeks ago, RAG and vector embeddings were terms I vaguely understood. Today I can sketch the architecture for an engineer, and use GitHub properly!

Concepts I can now apply to a professional use case:

RAG (Retrieval-Augmented Generation): the architectural pattern that grounds answers in a corpus, rather than generating from training data alone.

Vector embeddings and cosine similarity: how language gets encoded as 768-dimensional vectors, and how “closeness in meaning” becomes a single score, in practice usually between 0 and 1.

Chunking strategy: how to split a corpus so meaning is preserved. Bad chunking poisons retrieval.

System prompts and instruction following: how strictly the model obeys the rules you write for it. This is why I upgraded from Haiku 4.5 to Sonnet 4.6 to get FAQ-strict mode behaving.

Retrieval thresholds as editorial judgments: the choice of what counts as a confident answer is an editorial decision with public-interest weight.

Anti-fabrication and anti-conflation: the discipline of stopping the model from stitching plausible answers from adjacent material.
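To make the cosine similarity idea concrete, here is a minimal sketch in plain Python. It is not the code running on the site: the three-number vectors are made-up stand-ins for real 768-dimensional embeddings, and the function name is only illustrative. Mathematically the score can range from -1 to 1, although text embeddings often land between 0 and 1.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


# Toy 3-dimensional "embeddings" (invented values; real ones have 768 numbers).
question = [0.9, 0.1, 0.0]
close_chunk = [0.8, 0.2, 0.1]
unrelated_chunk = [0.0, 0.1, 0.9]

print(cosine_similarity(question, close_chunk))      # high: similar direction
print(cosine_similarity(question, unrelated_chunk))  # low: different direction
```

The score depends only on the direction of the vectors, not their length, which is why two passages of very different sizes can still count as close in meaning.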

The stack I can name and operate:

Cloudflare’s edge platform: Workers, Vectorize, Workers AI, D1.

Anthropic API: generation via Claude Sonnet 4.6.

Vercel: frontend hosting.

GitHub: source control.

Local toolchain on my Windows machine: Wrangler, Node.js, PowerShell, Git, Bash.

I can trace a question from someone’s browser tab in Brisbane to Cloudflare’s edge in Kuala Lumpur to Anthropic’s API in San Francisco and back.

Engineering problems I overcame:

The Anthropic billing separation: claude.ai subscription credits and console.anthropic.com API credits are billed completely separately. I had $155 sitting in the wrong product before I realised they don’t talk to each other.

A PowerShell pipe variable scope issue that silently uploaded an empty Anthropic API key.

A hidden git divergence from a Notepad edit that was never committed. Five-step dance to resolve: stash, pull, stash pop, resolve the conflict, commit.

A GitHub token revoked by automatic secret-scanning when it appeared in a screenshot. I generated a fresh fine-grained token with the right repository scope.

Vim opened by an unexpected merge commit, twice. I escaped each time with `:qa` and `:q!`.

An ISP-level DNS block on `*.workers.dev` that made my bot work for everyone in the world except me. I ran `nslookup`, proved my ISP was rewriting the address to 127.0.0.1, and switched DNS to 1.1.1.1.

An accidental file-shuffle that moved `worker.mjs` and `schema.sql` into the wrong folder, breaking `wrangler deploy` until I moved them back with PowerShell’s `Move-Item`.

A schema migration on a live SQLite database with eight rows already in it, applied with `ALTER TABLE` rather than rebuilding.

The ASK bot’s first fabrication: a Bitcoin origin story, in my voice, stitched from my own posts across different eras into something that never happened. I caught it because I have ground truth. I also knew there were Bitcoin-thesis gaps in the corpus. That moment was a public-interest argument for accountant-curated AI provenance that I had to own.
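The live schema migration in the list above can be illustrated with Python's built-in sqlite3 module. This is a sketch of the general technique, not the actual migration: the table name, the column names and the in-memory database are all assumptions made for the example.

```python
import sqlite3

# In-memory stand-in for the live database (hypothetical schema).
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE questions (id INTEGER PRIMARY KEY, question TEXT NOT NULL)"
)

# Eight rows already exist before the migration.
conn.executemany(
    "INSERT INTO questions (question) VALUES (?)",
    [(f"question {n}",) for n in range(1, 9)],
)

# Add a column in place. Existing rows are kept and pick up the default value,
# so nothing has to be exported, dropped and rebuilt.
conn.execute(
    "ALTER TABLE questions ADD COLUMN addressed INTEGER NOT NULL DEFAULT 0"
)
conn.commit()

row_count = conn.execute("SELECT COUNT(*) FROM questions").fetchone()[0]
addressed_values = {row[0] for row in conn.execute("SELECT addressed FROM questions")}
print(row_count, addressed_values)
```

SQLite only accepts a `NOT NULL` column in `ADD COLUMN` when a non-null default is supplied, which is why the sketch includes `DEFAULT 0`.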

Watch out, techies - accountants are detail-oriented techies too!

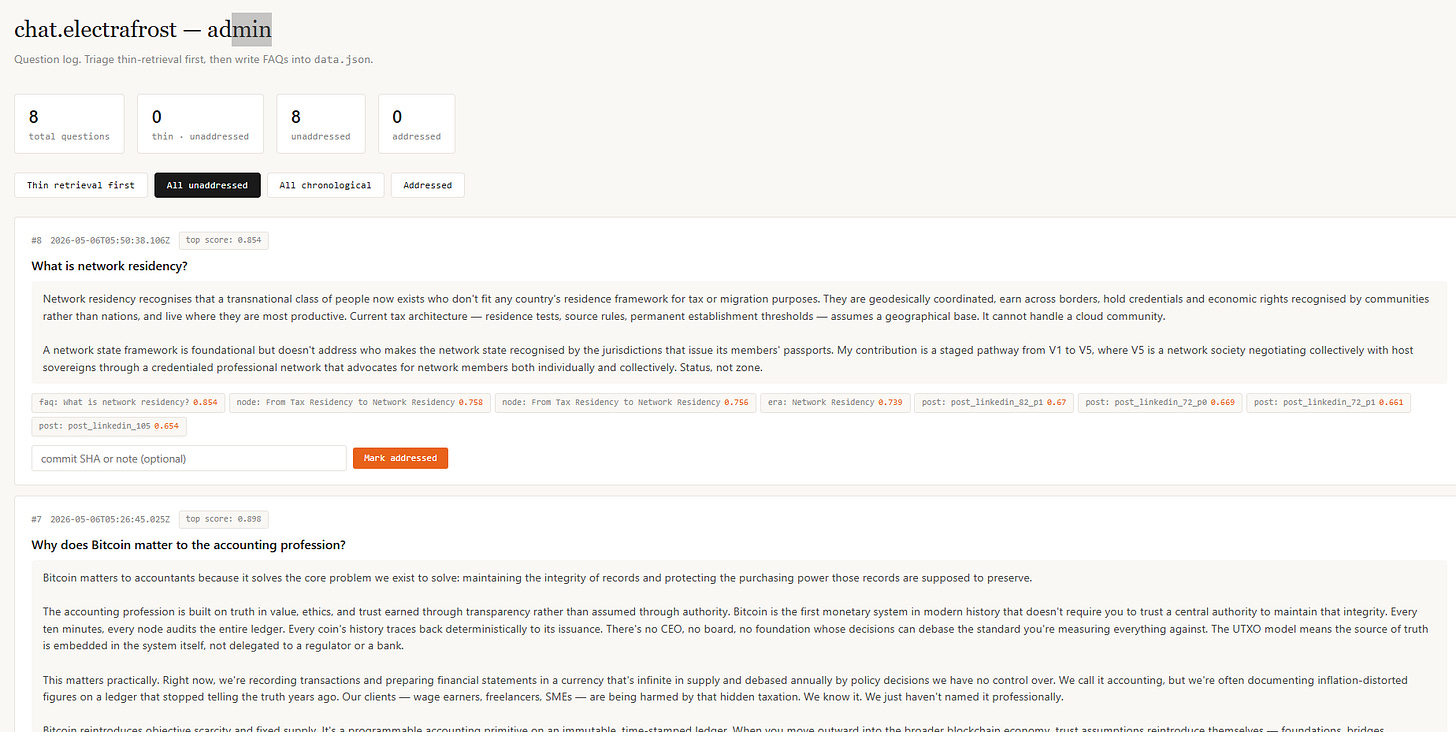

The admin backend to make my work interactive

I am most proud of this admin feedback loop I designed with Claude’s help.

It turns the system from a published artefact into an interactive knowledge-seeking and sharing corpus. If you met me at a conference, what would you want to learn from me? Don’t wait. Just ask my graph!

A visitor opens the ASK tab and types a question, or starts by clicking one of the showcase questions seeded there.

Their browser sends the question to a Cloudflare Worker running at the edge. The Worker calls Cloudflare Workers AI to embed the question into a 768-dimensional vector.

It queries Cloudflare Vectorize for the eight most similar corpus chunks by cosine similarity.

It sends those chunks, the question, and a strict system prompt to the Anthropic API (Claude Sonnet 4.6) for generation. The answer returns with citation pills pointing back into the graph.
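The steps above (embed the question, fetch the eight closest chunks, generate from them) can be sketched as one small function. This is an outline under stated assumptions, not the Worker's real code: the real system runs on Cloudflare Workers and calls Workers AI, Vectorize and the Anthropic API, whereas here those three services are passed in as plain functions and replaced by hypothetical fakes so the example runs offline. All names are invented for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Chunk:
    chunk_id: str
    text: str
    score: float  # cosine similarity between this chunk and the question


@dataclass
class Answer:
    text: str
    citations: list[str]  # chunk IDs that would be rendered as citation pills


def answer_question(
    question: str,
    embed: Callable[[str], list[float]],
    search: Callable[[list[float], int], list[Chunk]],
    generate: Callable[[str, str, list[Chunk]], str],
    system_prompt: str,
    top_k: int = 8,
) -> Answer:
    """Embed the question, retrieve the top_k chunks, then generate from them."""
    vector = embed(question)
    chunks = search(vector, top_k)
    text = generate(system_prompt, question, chunks)
    return Answer(text=text, citations=[chunk.chunk_id for chunk in chunks])


# Hypothetical stand-ins for the embedding model, vector index and LLM.
def fake_embed(text: str) -> list[float]:
    return [float(len(text))] + [0.0] * 767  # 768 numbers, like the real vectors


def fake_search(vector: list[float], top_k: int) -> list[Chunk]:
    return [Chunk(f"chunk-{i}", f"text {i}", 0.9 - 0.05 * i) for i in range(top_k)]


def fake_generate(system_prompt: str, question: str, chunks: list[Chunk]) -> str:
    return f"Answer to {question!r} grounded in {len(chunks)} chunks."


result = answer_question(
    "What is CREDU?", fake_embed, fake_search, fake_generate,
    system_prompt="Answer only from the supplied chunks.",
)
print(result.text)
print(result.citations)
```

Passing the services in as arguments keeps the retrieval logic testable without network access, and makes it clear that the citations come from retrieval rather than from the model's own output.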

Importantly, the visitor sees the AI-generated answer and is shown how faithfully it draws on my knowledge corpus.

The corpus itself is still being cleaned of AI slop, which takes time. But this feature is important - the citation pills show which chunks the answer used. Strict-FAQ mode kicks in when the top retrieval score is at or above 0.85, so canonical answers appear confidently.

When retrieval is thin, the visitor sees that too, and I am alerted to develop more content on what people want to know. The bot is a guided tour of what I have actually said, and lets my querents prompt me to produce more sought-after content.

A querent could even be a bot that needs to buy a credible opinion... that's where it gets interesting!

Behind the scenes, the Worker logs every question and answer to a Cloudflare D1 SQLite database, including the top retrieval scores, the chunk IDs that matched, and a thin_retrieval flag set when the top score falls below 0.6.
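The two thresholds and the logged fields can be sketched as follows. The 0.85 and 0.6 values come from the text above; the record layout, the field names and the choice to treat "no matches at all" as thin retrieval are assumptions made for this example, not the actual D1 schema.

```python
from dataclasses import dataclass

STRICT_FAQ_THRESHOLD = 0.85     # at or above: strict-FAQ mode
THIN_RETRIEVAL_THRESHOLD = 0.6  # below: flag the question as a corpus gap


@dataclass
class LogRecord:
    question: str
    answer: str
    top_scores: list[float]
    chunk_ids: list[str]
    strict_faq: bool
    thin_retrieval: bool


def build_log_record(
    question: str, answer: str, matches: list[tuple[str, float]]
) -> LogRecord:
    """Build one log entry from (chunk_id, score) retrieval matches."""
    ranked = sorted(matches, key=lambda match: match[1], reverse=True)
    # Assumption: an empty result set counts as the thinnest possible retrieval.
    top_score = ranked[0][1] if ranked else 0.0
    return LogRecord(
        question=question,
        answer=answer,
        top_scores=[score for _, score in ranked],
        chunk_ids=[chunk_id for chunk_id, _ in ranked],
        strict_faq=top_score >= STRICT_FAQ_THRESHOLD,
        thin_retrieval=top_score < THIN_RETRIEVAL_THRESHOLD,
    )


record = build_log_record(
    "What is CREDU?", "(generated answer)", [("faq-12", 0.91), ("post-301", 0.44)]
)
print(record.strict_faq, record.thin_retrieval)
```

Scores between 0.6 and 0.85 set neither flag: the answer is generated normally, but it is neither treated as canonical nor surfaced as a gap.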

My admin panel has four views: thin-retrieval-first (the gaps in my corpus, surfaced by visitors), all unaddressed, all chronological, addressed. A showcase and visitor split separates curated questions from live traffic. A mark-addressed workflow ties each gap to the commit SHA where I patched the corpus.
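One way to picture the four views and the mark-addressed workflow is as queries over a single log table. The sketch below uses Python's sqlite3 with an in-memory database; the table layout, the column names, the sample rows and the commit SHA are hypothetical, and "thin-retrieval-first" is read here as "unaddressed questions, weakest retrieval at the top", which is only one plausible interpretation.

```python
import sqlite3

# One query per admin view (hypothetical schema).
VIEWS = {
    "thin_first": "SELECT id FROM question_log WHERE addressed_commit IS NULL "
                  "ORDER BY thin_retrieval DESC, top_score ASC",
    "unaddressed": "SELECT id FROM question_log WHERE addressed_commit IS NULL "
                   "ORDER BY asked_at",
    "chronological": "SELECT id FROM question_log ORDER BY asked_at",
    "addressed": "SELECT id FROM question_log WHERE addressed_commit IS NOT NULL "
                 "ORDER BY asked_at",
}


def open_log() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.execute(
        """CREATE TABLE question_log (
               id INTEGER PRIMARY KEY,
               asked_at TEXT NOT NULL,
               question TEXT NOT NULL,
               top_score REAL NOT NULL,
               thin_retrieval INTEGER NOT NULL,
               is_showcase INTEGER NOT NULL DEFAULT 0,
               addressed_commit TEXT  -- NULL until the gap is patched
           )"""
    )
    return conn


def view(conn: sqlite3.Connection, name: str) -> list[int]:
    return [row[0] for row in conn.execute(VIEWS[name])]


def mark_addressed(conn: sqlite3.Connection, question_id: int, commit_sha: str) -> None:
    """Tie a logged question to the commit that patched the corpus."""
    conn.execute(
        "UPDATE question_log SET addressed_commit = ? WHERE id = ?",
        (commit_sha, question_id),
    )


conn = open_log()
conn.executemany(
    "INSERT INTO question_log (asked_at, question, top_score, thin_retrieval) "
    "VALUES (?, ?, ?, ?)",
    [
        ("2026-01-01", "well-covered question", 0.91, 0),
        ("2026-01-02", "question the corpus cannot answer", 0.42, 1),
        ("2026-01-03", "partly covered question", 0.70, 0),
    ],
)
before = view(conn, "thin_first")
mark_addressed(conn, 2, "abc1234")  # placeholder SHA, not a real commit
after = view(conn, "thin_first")
print(before, after, view(conn, "addressed"))
```

The `is_showcase` column is where a showcase and visitor split could filter, for example by adding `AND is_showcase = 0` to a view.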

When someone asks a question my corpus cannot answer well, I see it. I write a canonical FAQ. I commit, push, re-run the embedder.

The next visitor asking a similar question gets a grounded answer at over 0.85 similarity. The corpus learns from demand.

That way people can query my views, through an open source and interactive design.

What comes next

I’ve made two commitments, both flowing directly from building this thing.

The first is automation. Right now I add posts and CPD entries by hand, then re-run the embedder. In the next iteration, each Substack publish, each LinkedIn post, and each verified CPD event will flow into the corpus automatically, with the evidence hashed (SHA-256) and anchored on Bitcoin.
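The hashing half of that plan is simple to sketch with Python's standard library. The example below only computes SHA-256 digests; how a digest would then be anchored on Bitcoin is not shown. The record fields and the choice of canonical JSON (sorted keys, compact separators) are assumptions for illustration.

```python
import hashlib
import json


def evidence_digest(evidence: bytes) -> str:
    """SHA-256 digest of raw evidence bytes, as a 64-character hex string."""
    return hashlib.sha256(evidence).hexdigest()


def record_digest(record: dict) -> str:
    """Digest of a structured record, serialised in a canonical form.

    Sorting keys and fixing separators means the same record always
    produces the same bytes, and therefore the same digest.
    """
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return evidence_digest(canonical.encode("utf-8"))


# Hypothetical CPD record; field names and values are invented.
cpd_record = {
    "type": "cpd",
    "title": "Example seminar",
    "hours": 2,
    "completed": "2026-01-15",
}

digest = record_digest(cpd_record)
print(digest)
```

Only the digest would need to be published: anyone holding the original record can recompute it and compare, while the record itself can stay private.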

The design is vendor-neutral and the durability is independent of any provider. The professional body becomes one issuer among many, evaluated on the persistence of its attestations.

The second commitment is a V1 for CREDU, starting with a fork-and-deploy template every accountant can run.

CREDU V1 will live in three repos under credu-protocol:

the spec,

the professional-graph-template, and

the federation registry.

Entry is current membership of an IFAC-recognised professional body. Once admitted, CPD and publications anchor on Bitcoin. My graph.electrafrost.com is the prototype for credu.electrafrost.com, which will be the first reference node.

Every dead end I hit on this build helps refine the template to abstract away friction for the next accountant. My effort will save my peers millions of unpaid hours at scale.

Individual members of the accounting profession who are now embracing AI, Bitcoin and converging technologies need to be seen for what they can do, what they have achieved, and what they have to offer into the future.

We cannot be fully seen and trusted if we are not online in our own right, marketing our unique and verifiable talents for generative engine optimisation (GEO) in our own voices, on our own domains.

I built mine.

Open the bot. Ask Electra something. CREDU is next! Then it’s your turn.

Electra Frost is a Chartered Tax Adviser and Fellow of the Institute of Public Accountants, with 25 years of specialist public practice experience with innovative and creative businesses, using Bitcoin and open blockchains since 2013. Founder/facilitator of CREDU academy and systems (a new proof of concept now in development). Deputy President, IPA Malaysia Member Advisory Committee. Based at Network School, Forest City, Malaysia.

Full profile: graph.electrafrost.com